Guy Zaidner and Dr Mitch Pryor are researchers from The University of Texas at Austin who have developed Vaultbot – a Husky-based robot built to improve situational awareness in the teleoperation of dual-arm mobile robots, specifically in hazardous and uncertain environments.

The Challenges of Robot Teleoperation

When it comes to hazardous and uncertain environments, using a robot instead of a person seems like a great idea. However, indirect teleoperation – the act of controlling a robot remotely – is often very challenging due to the lack of proper visual feedback. For example, video feeds often have low resolution and a low frame rate (frames per second, or FPS), offer a limited field of view (FOV) and suffer from high latency.

Teleoperating a robot poses several challenges. To begin with, the operator needs to be equipped with various wearable devices, such as a head-mounted display (HMD) and force and haptic feedback devices. While these devices may increase the amount of information an operator is able to take in, they can also give the operator motion sickness.

Another issue is that operating a robot using a standard 2D monitor can be complicated due to the lack of perceived depth and the limited FOV.

The last issue outlined in this research is lag, the round-trip latency between the operator and the robot, which may lead to unstable control of the robot. When latency exceeds 50-100 ms, the operator may conclude that the system is not responding and reissue the command, which causes the robot to overreact.
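The overreaction described above can be illustrated with a small sketch that drops a repeated command while the first copy is still in flight. This is not taken from the Vaultbot software; the class name `CommandGuard`, its fields and the 100 ms warning threshold (the upper end of the band quoted above) are assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed threshold: the upper end of the 50-100 ms band mentioned in the text.
LATENCY_WARN_S = 0.100


@dataclass
class CommandGuard:
    """Hypothetical helper that suppresses a repeated command while the
    first copy could still be travelling to the robot and back."""

    round_trip_s: float                    # measured round-trip latency, seconds
    last_command: Optional[str] = None
    last_sent_at: float = float("-inf")

    def should_send(self, command: str, now: float) -> bool:
        # A command is "in flight" until one full round trip has elapsed.
        in_flight = (now - self.last_sent_at) < self.round_trip_s
        if command == self.last_command and in_flight:
            return False                   # duplicate sent before feedback could arrive
        self.last_command = command
        self.last_sent_at = now
        return True

    def latency_is_noticeable(self) -> bool:
        # True when the operator is likely to perceive the system as unresponsive.
        return self.round_trip_s > LATENCY_WARN_S


guard = CommandGuard(round_trip_s=0.25)
first = guard.should_send("forward", now=0.0)    # sent
repeat = guard.should_send("forward", now=0.1)   # dropped, first copy still in flight
later = guard.should_send("forward", now=0.3)    # sent, a full round trip has passed
```

A guard like this only hides the symptom. The interface described later in the article takes a different route and shows the operator what was sent, so that the urge to repeat the command does not arise in the first place.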

Together, these issues lead to a Situational Awareness (SA) problem. The team defines SA as “the perception of environmental elements and events with respect to time or space, the comprehension of their meaning, and the projection of their future status.”

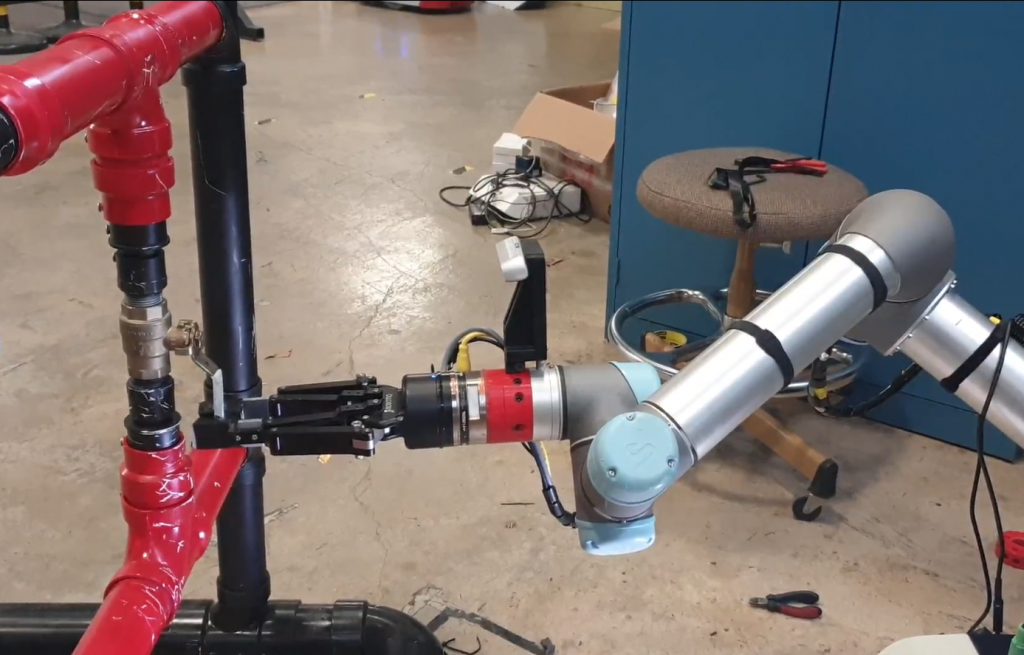

Side view of one of the teleoperated Vaultbot arms

Vaultbot to the Rescue

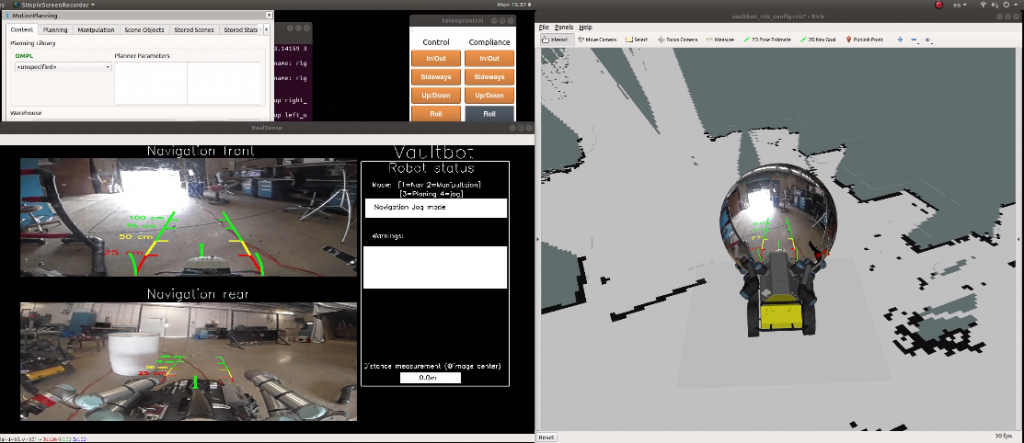

By creating a task-based graphical user interface (GUI) that incorporates principles from SA theory and the psychology behind motion perception, the research team has been able to address these common problems.

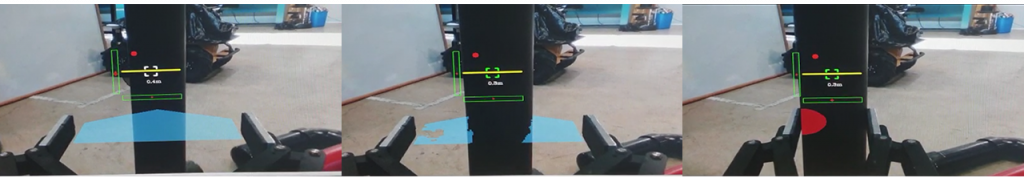

For remote navigation, an integrated screen shows the environment at two levels. First, using a 360° camera, the operator can see and explore the environment from a “driver” point of view on a spherical image. Second, by zooming out, the operator sees the robot on a map (bird’s-eye view). While moving the robot, the user sees the velocity commands that have been sent to it, and so can expect with more confidence that the robot will move after a short delay. By switching to manipulation mode, the user sees a different screen, which shows an image from the gripper's point of view. A dynamic overlay, which constitutes a virtual grip plane, provides depth perception only at the relevant region of interest. When the object is close enough for grasping, the object will override the virtual surface.

For the kinesthetic problem, the researchers used a “point pattern” that shows the relative position of the end effector and the robot base alongside kinematic boundaries to prevent singularities. The image from the gripper changes according to the rotation and translation of the gripper for better spatial perception. After grasping an object, the user sees an indication of the applied forces on the object which allows the user to regulate the contact forces.
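One kinematic boundary that is easy to illustrate is the outer edge of the workspace, since a fully stretched arm is a common singular configuration. The sketch below is not the researchers' point pattern. It is a minimal example that assumes a nominal UR5 reach of 0.85 m and a hypothetical warning threshold of 90%, and it ignores other singularities, such as those at the wrist or shoulder.

```python
import math

# Assumption: nominal UR5 reach in metres, measured from the arm's base.
UR5_MAX_REACH_M = 0.85


def reach_fraction(base_xyz, tool_xyz, max_reach=UR5_MAX_REACH_M):
    """Distance from arm base to end effector as a fraction of maximum reach."""
    return math.dist(base_xyz, tool_xyz) / max_reach


def boundary_status(base_xyz, tool_xyz, warn_at=0.9):
    """Classify the end-effector position relative to the outer workspace boundary.

    warn_at is a hypothetical threshold, chosen only for this example.
    """
    fraction = reach_fraction(base_xyz, tool_xyz)
    if fraction >= 1.0:
        return "out of reach"
    if fraction >= warn_at:
        return "near boundary"
    return "ok"


base = (0.0, 0.0, 0.0)
inside = boundary_status(base, (0.4, 0.0, 0.0))     # well inside the workspace
stretched = boundary_status(base, (0.8, 0.0, 0.0))  # arm almost fully extended
beyond = boundary_status(base, (0.9, 0.0, 0.0))     # target cannot be reached
```

A display could map these three states to colours or to the spacing of an on-screen pattern, so that the operator notices the boundary before the arm reaches it.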

To address the latency problem, the user sees both the velocity commands from the input device and a simulated arm, aligned with the real arm, that responds with less latency than the real one.
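The idea of a simulated arm that responds ahead of the real one can be sketched with a one-dimensional toy model. This is an illustration only, not the Vaultbot implementation: the classes `DelayedArm` and `SimulatedArm`, the fixed delay measured in ticks and the single-joint motion are all assumptions.

```python
from collections import deque


class DelayedArm:
    """Toy 1-D joint whose motion lags the commands by a fixed number of ticks."""

    def __init__(self, delay_ticks):
        self.position = 0.0
        # Queue of velocity commands still travelling to the robot.
        self._pending = deque([0.0] * delay_ticks)

    def step(self, velocity, dt):
        self._pending.append(velocity)
        # Only the oldest command has arrived and can move the joint.
        self.position += self._pending.popleft() * dt
        return self.position


class SimulatedArm:
    """Local model that applies each command immediately."""

    def __init__(self):
        self.position = 0.0

    def step(self, velocity, dt):
        self.position += velocity * dt
        return self.position


def run(commands, delay_ticks, dt):
    """Return (simulated, real) positions after each command tick."""
    real, sim = DelayedArm(delay_ticks), SimulatedArm()
    return [(sim.step(v, dt), real.step(v, dt)) for v in commands]


# Push the joint for three ticks, then release the input device.
trace = run([1.0, 1.0, 1.0, 0.0, 0.0, 0.0], delay_ticks=2, dt=0.5)
```

In this trace the simulated position moves on the first tick, while the real position does not change until the delay has elapsed. Both end at the same value, which is what lets the operator trust the preview instead of repeating the command.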

Linear perspective lines view

Gripper view with 2D depth and forces sensing

The Technical Makeup of Vaultbot

The Vaultbot is built on Husky UGV and has two integrated UR5 collaborative robot arms. The robot also carries the following sensors: a UM6 inertial measurement unit (IMU), a SICK LMS511 2D LIDAR, a NetFT force torque sensor, Intel RealSense D435 cameras and two 4K Kodak PixPro cameras.

The robot uses two synchronized ROS Melodic masters, each running on a separate machine with Ubuntu 18.04. One master is responsible for the entire robot except the cameras, while the other is fully dedicated to the onboard cameras. The motivation for this setup was safety, since Vaultbot was designed to work in hazardous environments: if the robot computer requires a remote reboot due to a malfunction, the cameras stay on and continue broadcasting.

The Vaultbot is equipped with UM6 inertial measurement unit (IMU), SICK LMS511 2D LIDAR, NetFT force torque sensor, two integrated UR5 collaborative robot arms, Intel RealSense D435 cameras and two 4K Kodak PixPro cameras


Why They Chose to Build on Husky UGV

The researchers needed a mobile platform that was reliable, since it would be working in dangerous environments, and robust enough to carry additional sensors and equipment. They found both in Husky UGV.

“Working with Husky UGV has been a really great experience. Both the hardware and software are stable for extended periods of time. The integration of ROS, software and sensors couldn’t be more easy,” said Zaidner. Furthermore, Clearpath has proven itself to be a reliable and stable vendor, assuring that any developed capabilities can be reproduced using its commercially available hardware. Clearpath was instrumental in helping UT Austin develop a dual-arm mobile manipulator from commercially available components with a high probability of long-term support.

Vaultbot In The Wild

The Vaultbot has been used to support funded projects from Los Alamos National Laboratory, Savannah River National Laboratory, and several oil and gas (O&G) industrial partners, including Woodside.

The Vaultbot has also been featured in various publications related to use in nuclear environments and has been featured by IEEE Spectrum. The manuscript related to the featured situational awareness capabilities is currently under development.
